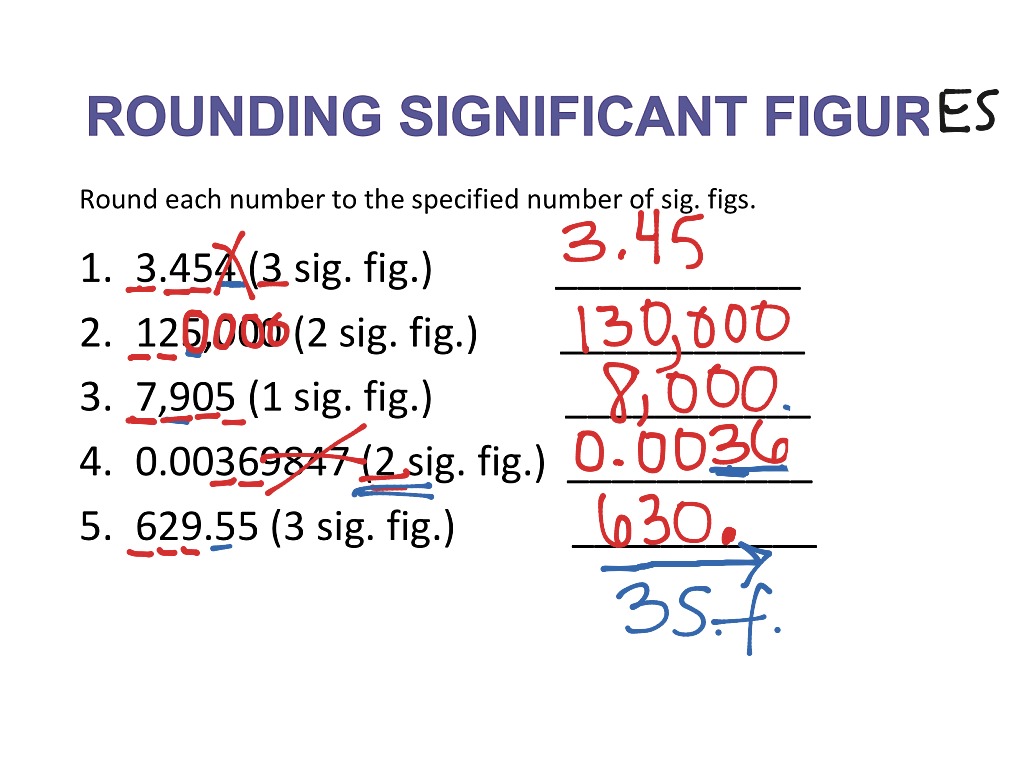

An ANOVA is a statistical test that is used to determine whether or not there is a statistically significant difference between the means of three or more independent groups.

The hypotheses used in an ANOVA are as follows:

The null hypothesis (H0): µ1 = µ2 = µ3 = … = µk (the means are equal for each group)

The alternative hypothesis (Ha): at least one of the means is different from the others

If the p-value from the ANOVA is less than the significance level, we can reject the null hypothesis and conclude that we have sufficient evidence to say that at least one of the group means is different from the others.

However, this doesn’t tell us which groups are different from each other. It simply tells us that not all of the group means are equal. To find out exactly which groups are different from each other, we must conduct a post hoc test (also known as a multiple comparison test), which allows us to explore the differences between multiple group means while also controlling the family-wise error rate.

Technical Note: We only need to conduct a post hoc test when the p-value for the ANOVA is statistically significant. If the p-value is not statistically significant, we do not have sufficient evidence that any of the group means differ, so there is no need to conduct a post hoc test to find out which groups are different from each other.

The Family-Wise Error Rate

As mentioned before, post hoc tests allow us to test for differences between multiple group means while also controlling the family-wise error rate.

In a hypothesis test, there is always a type I error rate, which is defined by our significance level (alpha) and tells us the probability of rejecting a null hypothesis that is actually true. In other words, it’s the probability of getting a “false positive”, i.e. when we claim there is a statistically significant difference among groups, but there actually isn’t.

When we perform one hypothesis test, the type I error rate is equal to the significance level, which is commonly chosen to be 0.01, 0.05, or 0.10. However, when we conduct multiple hypothesis tests at once, the probability of getting at least one false positive increases. This probability of making at least one type I error across a set of tests is the family-wise error rate.

For example, imagine that we roll a 20-sided die. The probability that the die lands on a “1” is just 5%. But if we roll two dice at once, the probability that at least one of the dice will land on a “1” increases to 9.75%. If we roll five dice at once, the probability increases to 22.6%.

The more dice we roll, the higher the probability that at least one of the dice will land on a “1.” Similarly, if we conduct several hypothesis tests at once using a significance level of .05, the probability that we get at least one false positive increases beyond 0.05.

When we conduct an ANOVA, there are often three or more groups that we are comparing to one another. Thus, when we conduct a post hoc test to explore the differences between the group means, there are several pairwise comparisons we want to explore.

For example, suppose we have four groups: A, B, C, and D. This means there are a total of six pairwise comparisons we want to look at with a post hoc test: A – B (the difference between the group A mean and the group B mean), A – C, A – D, B – C, B – D, and C – D.

If we have more than four groups, the number of pairwise comparisons we will want to look at increases even further. The following table illustrates how many pairwise comparisons are associated with each number of groups, along with the family-wise error rate:

Notice that the family-wise error rate increases rapidly as the number of groups (and consequently the number of pairwise comparisons) increases. In fact, once we reach six groups, the probability of getting a false positive is above 50%!
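The dice probabilities and the family-wise error rates discussed above can be reproduced with a short Python sketch. It assumes the tests are independent, so the family-wise error rate is 1 − (1 − alpha)^c for c tests. Pairwise comparisons in an ANOVA are not truly independent, so treat the numbers as an approximation. The function names `family_wise_error_rate` and `pairwise_comparisons` are illustrative and do not come from any library.

```python
from math import comb


def family_wise_error_rate(num_tests: int, alpha: float = 0.05) -> float:
    """Probability of at least one false positive across num_tests tests.

    Assumes the tests are independent (an approximation for ANOVA
    pairwise comparisons).
    """
    return 1 - (1 - alpha) ** num_tests


def pairwise_comparisons(num_groups: int) -> int:
    """Number of distinct pairs among num_groups groups: k * (k - 1) / 2."""
    return comb(num_groups, 2)


if __name__ == "__main__":
    # Dice analogy: each 20-sided die shows a "1" with probability 0.05,
    # the same as a single test's type I error rate at alpha = 0.05.
    for n_dice in (1, 2, 5):
        p = family_wise_error_rate(n_dice)
        print(f"{n_dice} dice: P(at least one '1') = {p:.2%}")

    # Pairwise comparisons and approximate family-wise error rate
    # for 2 to 6 groups at alpha = 0.05.
    print(f"{'groups':>6} {'comparisons':>11} {'FWER':>8}")
    for k in range(2, 7):
        c = pairwise_comparisons(k)
        print(f"{k:>6} {c:>11} {family_wise_error_rate(c):>8.2%}")
```

With four groups, `pairwise_comparisons(4)` gives the six comparisons listed in the text, and with six groups the 15 comparisons push the approximate family-wise error rate past 50%.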
